Rumor mill: According to industry sources, Nvidia has shared reference boards with its OEM partners for the flagship RTX 4000-series GPUs, which might be called the RTX 4080, 4080 Ti, 4090, or 4090 Ti. It hasn't delivered the GPUs themselves yet, but the boards are designed to consume 600W of power.

Several leakers with good track records have recently said that they expect the RTX 4080 or 4090 to have a 600W TBP (total board power). A higher-end model, either the RTX 4090 Ti or some special edition card, could consume over 800W. But they've also attached several disclaimers to those numbers, noting that it's too early for exact details to have been finalized.

Igor's Lab reports that Nvidia has told OEMs to test the reference boards, which are meant to help them design their PCBs and coolers, at the full 600W. Not every 4080 or 4090 will necessarily consume 600W out of the box, but at least some will turbo or overclock into that power bracket.

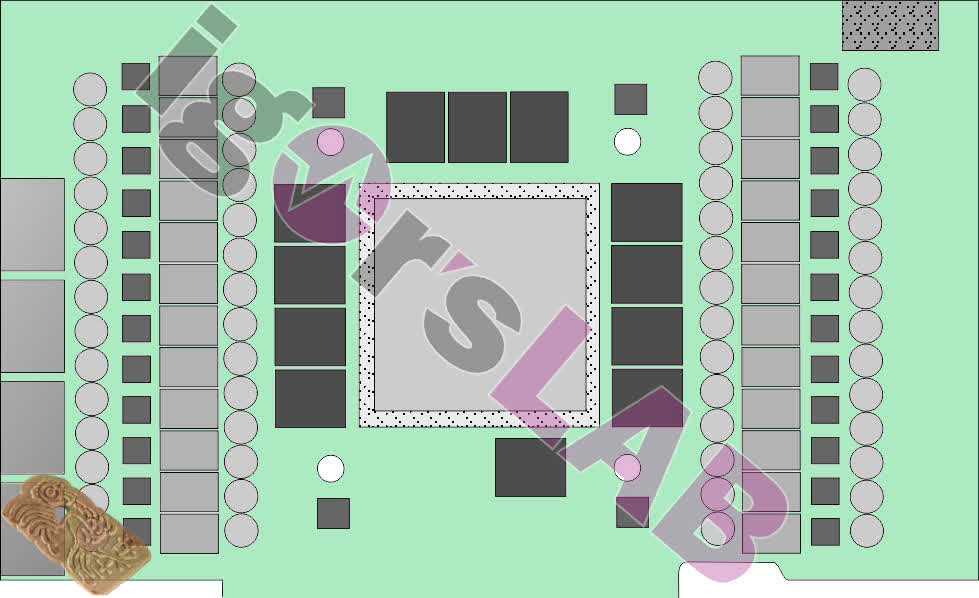

Igor's Lab received a picture of a reference board (above) from a Chinese OEM and confirmed its authenticity with two other sources. One standout feature was the twelve positions for memory modules, which indicate that the GPU will have either 12 GB or 24 GB of GDDR6X memory, most likely the latter.
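The 12 GB and 24 GB figures follow from simple arithmetic on the module count. The sketch below is only an illustration: the helper name `total_vram_gb` is hypothetical, and it assumes the board would use GDDR6X modules in 8 Gb (1 GB) or 16 Gb (2 GB) densities, which the leak itself does not state.

```python
# Hypothetical helper: total VRAM for a board populated with identical modules.
def total_vram_gb(module_count: int, density_gbit: int) -> float:
    """Return total capacity in GB, given per-module density in gigabits."""
    return module_count * density_gbit / 8  # 8 gigabits per gigabyte


MODULES = 12  # memory positions visible on the leaked reference board

# Assumed module densities: 8 Gb (1 GB) and 16 Gb (2 GB).
for density_gbit in (8, 16):
    capacity = total_vram_gb(MODULES, density_gbit)
    print(f"{MODULES} x {density_gbit} Gb = {capacity:.0f} GB")
```

Under those assumptions, twelve modules give 12 GB at the lower density and 24 GB at the higher one, matching the two capacities mentioned above.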

It also had an impressive 24 voltage converters, up from 20 on the Founders Edition RTX 3090 PCB (shared between the GPU and memory). It used UPI Semi's UP9512 controllers, each capable of driving eight phases, so covering all 24 converters would take three controllers.

All those converters and modules are fed by one 12VHPWR connector, also known as the PCIe 5.0 power plug, which can deliver 600W on its own. Igor's Lab notes that OEMs are designing PCBs that use the full 600W, but, as mentioned, that's a ceiling, not an average. Each card will come with an adaptor that splits the 12VHPWR connector into four 6+2 pin Molex plugs, even though most power supplies don't come with that many cables.
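For a rough sense of what that ceiling means, the sketch below works through the power budget. It is a back-of-the-envelope illustration, not a specification: the function names are hypothetical, and the 150W limit per 6+2 pin plug is an assumption based on the usual PCIe rating rather than anything stated in the report.

```python
RAIL_VOLTAGE_V = 12.0      # nominal 12 V supply rail
CONNECTOR_LIMIT_W = 600.0  # 12VHPWR ceiling cited in the report
EIGHT_PIN_LIMIT_W = 150.0  # assumption: rated limit of one 6+2 pin PCIe plug


def rail_current_a(power_w: float, voltage_v: float = RAIL_VOLTAGE_V) -> float:
    """Current drawn from the rail at a given power (I = P / V)."""
    return power_w / voltage_v


def adaptor_capacity_w(plug_count: int, per_plug_w: float = EIGHT_PIN_LIMIT_W) -> float:
    """Combined rated power of several 6+2 pin plugs feeding one adaptor."""
    return plug_count * per_plug_w


print(f"Current at the ceiling: {rail_current_a(CONNECTOR_LIMIT_W):.0f} A")
print(f"Four-plug adaptor capacity: {adaptor_capacity_w(4):.0f} W")
```

With those numbers, a card running at the full 600W would pull about 50 A through a single connector, and four plugs at 150W each would add up to the same 600W, which would explain why the adaptor needs that many cables.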

The insiders expect Nvidia to reuse the 3090's three-slot air cooler for the 4080 and 4090. Meanwhile, manufacturers are reportedly preparing 3.5-slot air coolers and considering water-cooled solutions similar to what AMD used for the RX 6900 XT LC.

In a potential first, the 3090 Ti and 4080 are allegedly pin-compatible, meaning they can use the same PCB designs. Igor's Lab speculates that OEMs might be using the 3090 Ti to test their 4080 coolers and PCBs, in which case the two cards might end up sharing parts.

At the very least, the 3090 Ti is the trial run for the 12VHPWR connector. Rumors indicate that the 3090 Ti will consume 400-500W depending on the model, a range that looks crazy compared to the rest of the RTX 3000-series but tame compared to the rumored figures for the RTX 4080 and 4090.

Image Credit: Thomas Foster

https://www.techspot.com/news/93940-nvidia-reportedly-making-600w-reference-boards-rtx-4090.html