A checksum is a number, in the form of a binary or hexadecimal value, that's been derived from a data source. The important bits to know are that it's typically much smaller than the source, and that two different sources are very unlikely to produce the same value.
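As a rough illustration of both properties, here is a minimal Python sketch using the standard library's `hashlib`. SHA-256 is only one possible choice of algorithm, and the helper name `sha256_hex` and the sample data are made up for this example.

```python
import hashlib

def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 checksum of data as a hexadecimal string."""
    return hashlib.sha256(data).hexdigest()

source = b"x" * 1_000_000        # a 1 MB data source (sample data, assumption)
altered = source[:-1] + b"y"     # the same data with only the last byte changed

checksum = sha256_hex(source)

# The checksum is always 32 bytes (64 hex characters), whatever the input size.
print(len(source), "bytes in,", len(checksum) // 2, "bytes out")

# A one-byte change in the source gives a different checksum.
print(checksum == sha256_hex(altered))
```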


What Is a Checksum, and What Can You Do With It?

- Thread starter neeyik


Good topic as I'm doing Cryptography in school right now

Right now we're covering different ciphers and hashing.


PEnnn

Posts: 1,285 +1,853

arrowflash

Posts: 583 +696

Nice article, but I think that just as important as using checksums to verify downloaded files, maybe even more so, is using them to verify files copied between local storage devices, and between different computers in LANs.

I recommend that everyone use programs that automatically verify checksums when copying files between devices (e.g. from local storage to a USB disk) or over a network. Most third-party file copy and backup programs have this feature, but in many it's disabled by default and must be toggled on.

Hashing and comparing files when copying files or directories to a different device or partition should have become a standard feature on Windows and Linux, with full GUI support and enabled by default, a long time ago. The copy process will be about 15% to 35% slower, but it gives peace of mind and can prevent serious headaches. Using this feature has saved me from future headaches a few times, when the copy program warned that checksums didn't match, due to corruption or because of antivirus meddling.

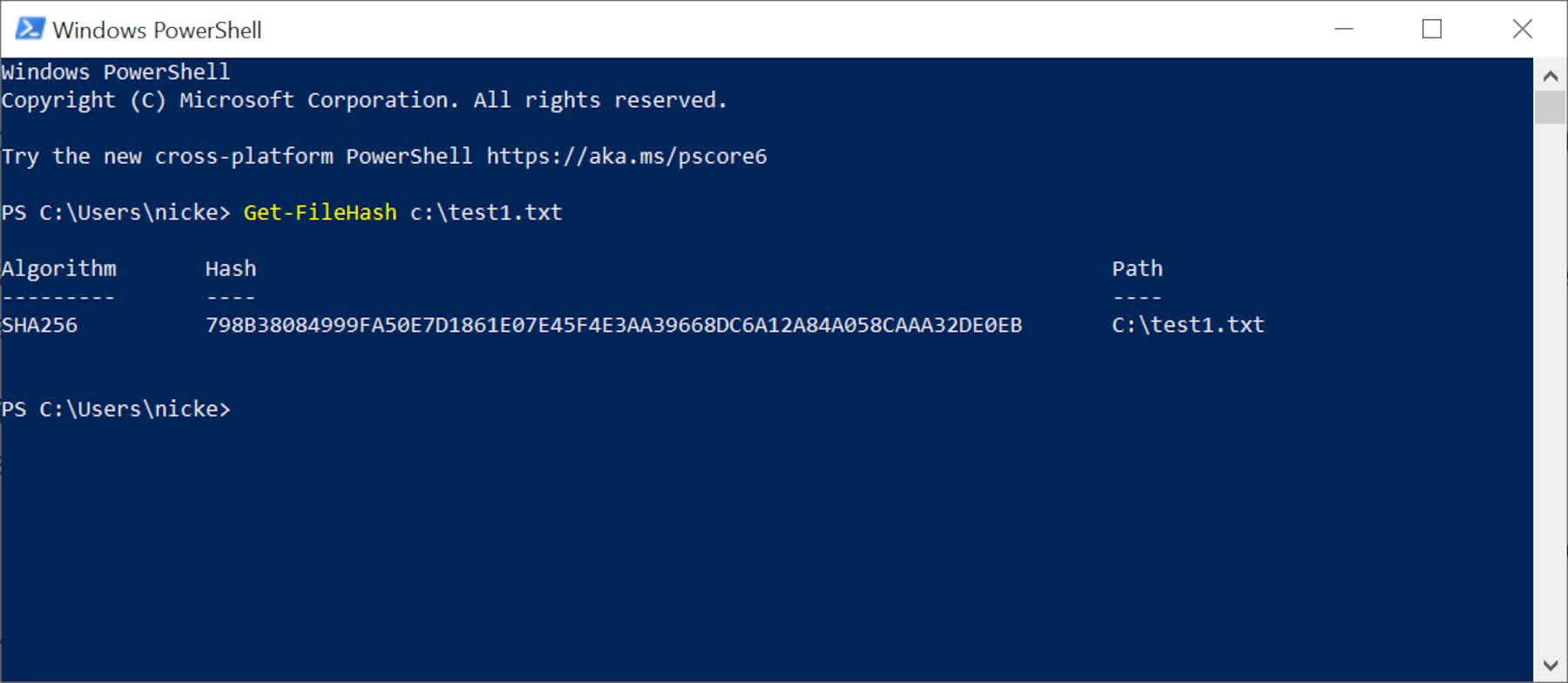

To automatically hash and compare files during copies, I use TeraCopy; I've been using this program for almost 10 years, and on my machines I configure it to automatically replace the standard Windows file copy dialog. To just verify the checksum of a single file, or compare 2 existing files, PowerShell works, but if you want a GUI program there are many freeware programs that will do the job. My favorite is "CHK Hash Tool" - just open the program, drag the files into the window, and that's it (it automatically calculates the checksums). For more complex tasks, I'm sure most here have already heard of WinMerge - which not only compares files but, in case they aren't identical, can also show how they differ.
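For anyone curious what "hash and compare during copies" boils down to, here is a minimal Python sketch. It is not how TeraCopy or any other tool mentioned here actually works internally; the function names `file_sha256` and `verified_copy` are hypothetical, and SHA-256 is just one reasonable choice of algorithm.

```python
import hashlib
import shutil

def file_sha256(path, chunk_size=1024 * 1024):
    """Hash a file in chunks so large files don't have to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def verified_copy(src, dst):
    """Copy src to dst, then re-read both files and compare their checksums."""
    shutil.copyfile(src, dst)
    src_sum = file_sha256(src)
    dst_sum = file_sha256(dst)
    if src_sum != dst_sum:
        raise IOError(f"checksum mismatch copying {src} to {dst}")
    return dst_sum
```

Calling `file_sha256` on two existing files and comparing the results covers the "compare 2 existing files" case as well. One caveat: reading the destination back right after writing may be served from the operating system's cache rather than from the device itself, so a sketch like this is weaker than a tool that forces a real re-read.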

When talking about backups, it's also very good practice to generate and keep a .csv file or database with the checksums of all your backed-up files. You know, in case the data on the backup media gets corrupted over time but not in a noticeable way (e.g. no errors during the backup restore process). I've never done it myself, out of laziness and lack of patience to deal with this, but it really is something I'd recommend doing.
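A checksum list like that can be sketched in a few lines of Python. Everything here is an assumption for illustration: the two-column CSV layout, the function names, and the choice of SHA-256. The manifest should be stored outside the folder being hashed, otherwise it would end up listing itself.

```python
import csv
import hashlib
from pathlib import Path

def file_sha256(path, chunk_size=1024 * 1024):
    """Hash a file in chunks so large files don't have to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def write_manifest(root, manifest_path):
    """Record the checksum of every file under root in a CSV file."""
    root = Path(root)
    with open(manifest_path, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(["relative_path", "sha256"])
        for path in sorted(p for p in root.rglob("*") if p.is_file()):
            writer.writerow([path.relative_to(root).as_posix(), file_sha256(path)])

def verify_manifest(root, manifest_path):
    """Return the relative paths that are missing or whose checksum changed."""
    root = Path(root)
    bad = []
    with open(manifest_path, newline="", encoding="utf-8") as f:
        for row in csv.DictReader(f):
            target = root / row["relative_path"]
            if not target.is_file() or file_sha256(target) != row["sha256"]:
                bad.append(row["relative_path"])
    return bad
```

Running `write_manifest` right after a backup and `verify_manifest` before a restore would flag files that silently changed in between. It does not notice files that were added after the manifest was written.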


kiwigraeme

Posts: 1,999 +1,410

I've used hashmyfiles-x64 and it seems to work quite well. I always assume Google Drive etc. do some check automatically. I try to download from the primary source, but as we saw with CCleaner, downloads and repositories can be hacked and malicious code added.

gamerk2

Posts: 971 +984

Me (admittedly in the past): This is great, let me check the checksum! Only to cancel it 2 minutes later because it takes too much time.

To be fair, it depends on the algorithm. If you just need to perform verification of a file (and not security), then something relatively quick and simple (MD5?) should suffice.

I work on embedded systems, and we typically include the file checksum for our executables in a fixed address so we can verify the program before we flash it in (we check the checksum of the image against the value in this address prior to loading it). Keep in mind, in our case if we load a bum image it's a whole process to fix things, which takes several hours per unit.
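The check described above could look roughly like the following Python sketch. The layout is entirely hypothetical: a 32-bit little-endian CRC-32 stored at offset `0x10`, computed over every byte of the image except the checksum field itself. A real project would use whatever address, width and algorithm its toolchain defines.

```python
import struct
import zlib

CHECKSUM_OFFSET = 0x10   # hypothetical fixed address of the stored checksum
CHECKSUM_SIZE = 4        # assumed: 32-bit CRC, little-endian

def compute_image_crc(image: bytes) -> int:
    """CRC-32 over the image, skipping the bytes that hold the checksum itself."""
    crc = zlib.crc32(image[:CHECKSUM_OFFSET])
    crc = zlib.crc32(image[CHECKSUM_OFFSET + CHECKSUM_SIZE:], crc)
    return crc & 0xFFFFFFFF

def stamp_image(image: bytes) -> bytes:
    """Build step: write the computed CRC into the fixed checksum field."""
    if len(image) < CHECKSUM_OFFSET + CHECKSUM_SIZE:
        raise ValueError("image too small to hold the checksum field")
    crc = compute_image_crc(image)
    return (image[:CHECKSUM_OFFSET]
            + struct.pack("<I", crc)
            + image[CHECKSUM_OFFSET + CHECKSUM_SIZE:])

def image_is_valid(image: bytes) -> bool:
    """Pre-flash step: compare the stored value against a fresh computation."""
    if len(image) < CHECKSUM_OFFSET + CHECKSUM_SIZE:
        return False
    (stored,) = struct.unpack_from("<I", image, CHECKSUM_OFFSET)
    return stored == compute_image_crc(image)
```

The flashing tool would call `image_is_valid` and refuse to load the image when it returns `False`, which is much cheaper than recovering a unit after a bad flash.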

Avro Arrow

Posts: 3,721 +4,821

I remember many years ago when "Checksum" was the original error-detecting algorithm for file transfers during the BBS days with the file-transfer protocol Xmodem. It was replaced by CRC in the SEAlink protocol and Ymodem, and then by CRC-32 in Zmodem.
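To show why CRC displaced the simple checksum, here is a small Python sketch of the two calculations: the 8-bit sum used by original Xmodem, and the 16-bit CRC commonly catalogued as CRC-16/XMODEM (polynomial 0x1021, initial value 0). The function names are made up for this example, and the framing of real protocol blocks is left out.

```python
def xmodem_checksum(block: bytes) -> int:
    """Original Xmodem check: the sum of all data bytes, kept to 8 bits."""
    return sum(block) & 0xFF

def crc16_xmodem(block: bytes) -> int:
    """Bitwise CRC-16 with polynomial 0x1021 and an initial value of 0."""
    crc = 0
    for byte in block:
        crc ^= byte << 8
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ 0x1021) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc

# Two bytes arriving in the wrong order: the sum cannot tell, the CRC can.
print(xmodem_checksum(b"AB") == xmodem_checksum(b"BA"))  # True
print(crc16_xmodem(b"AB") == crc16_xmodem(b"BA"))        # False
```

Because addition ignores byte order, the simple sum misses whole classes of errors (swapped bytes, or two errors that cancel out), which a CRC of the same data will generally catch.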
