Why it matters: Having taken the crown in board games, DeepMind has moved on to something more ambitious. Gone are the simple rule sets, two dimensions, and fixed grids; in their place are free 3D movement, randomly generated maps, and teamwork. Given just a single metric, victory or defeat, DeepMind's "FTW" AI secured victory after victory in a tournament against humans.

Even in a game as complex as Go, DeepMind was able to teach its AI, AlphaGo, the rules, the possible moves, and what each position on the board means.

In the Capture the Flag mode of the classic shooter Quake III, the AI instead had to analyze the raw on-screen image to work out what the rules are and how to win. It needed 140,000 games to match an "average human" and 175,000 to match a "strong human," and by the time the researchers stopped at 450,000 training games, it was significantly better than all human players.

Over the course of the tournament, human teams captured an average of 16 fewer flags than AI pairs, and a pair of professional gamers who could talk to each other beat the AI only 25% of the time after 12 hours of practice. To rub salt in the wound, the forty human players in the tournament rated the AI as more cooperative than their fellow humans. What does that say about us?

Despite never being told the rules or trained on a pre-made dataset of human games, the AI taught itself much as a human would. Once it grasped that it had its own base, that the enemy had one too, that bringing the enemy flag back to its own base scored a point, and that points mean victory, the AI slowly figured out how to kill enemy players and claim flags. Trained against offshoots of itself, it discovered basic strategies, like following its teammate and camping the enemy spawn point. Much like a human, though, it abandoned some strategies as it improved in favor of new ones, like defending its own base.
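To make the idea of training against offshoots of itself concrete, here is a toy sketch. It is not DeepMind's training code: the scalar `skill` value, the win-probability formula, and the `clone_every` schedule are all assumptions made up for illustration. The only feedback an agent receives is whether it won, and every so often the weakest agent is replaced by a copy (an offshoot) of the strongest.

```python
import random

def play(skill_a, skill_b, rng):
    """Return True if agent A wins. Hypothetical model: the chance of
    winning is proportional to A's share of the combined skill."""
    return rng.random() < skill_a / (skill_a + skill_b)

def train_population(size=4, rounds=200, clone_every=50, seed=0):
    """Toy self-play loop over a small population of agents."""
    rng = random.Random(seed)
    skills = [1.0] * size  # every agent starts out equally unskilled
    for round_no in range(1, rounds + 1):
        a, b = rng.sample(range(size), 2)  # pick two distinct agents
        winner = a if play(skills[a], skills[b], rng) else b
        skills[winner] += 0.1  # the only learning signal is the win itself
        if round_no % clone_every == 0:
            # Replace the weakest agent with an offshoot of the strongest.
            weakest = skills.index(min(skills))
            skills[weakest] = max(skills)
    return skills

if __name__ == "__main__":
    print(train_population())
```

The real agents are neural networks learning from pixels, so this captures only the shape of the loop: play, reward the winner, and keep seeding the population with copies of whatever is working best.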

The researchers built two layers into the AI: a 'thinking' layer, responsible for meta strategies, and a 'doing' layer, which translated those strategies into specific actions. The AI developed dedicated neurons for checking, for example, whether it had the flag, whether its teammate had the flag, whether an enemy was in sight, and where the enemy base was.
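The split between a slow 'thinking' layer and a fast 'doing' layer can be sketched in a few lines. This is a hand-written, rule-based illustration, not the learned neural network itself: the `Observation` fields, the strategy names, and the `think_every` interval are all hypothetical, chosen only to mirror the signals mentioned above.

```python
from dataclasses import dataclass

@dataclass
class Observation:
    """Hypothetical stand-ins for the signals the AI learned to track."""
    has_flag: bool
    teammate_has_flag: bool
    enemy_in_sight: bool

def think(obs):
    """Slow layer: pick a high-level strategy."""
    if obs.has_flag:
        return "return_to_base"
    if obs.teammate_has_flag:
        return "escort_teammate"
    return "attack_enemy_base"

# How the fast layer carries out each strategy when no enemy is visible.
MOVES = {
    "return_to_base": "move_to_own_base",
    "escort_teammate": "follow_teammate",
    "attack_enemy_base": "move_to_enemy_base",
}

def act(strategy, obs):
    """Fast layer: turn the current strategy into a concrete action."""
    if obs.enemy_in_sight:
        return "shoot"
    return MOVES[strategy]

def run(observations, think_every=4):
    """Act on every step, but revisit the strategy only every few steps."""
    strategy = None
    actions = []
    for step, obs in enumerate(observations):
        if step % think_every == 0:
            strategy = think(obs)
        actions.append(act(strategy, obs))
    return actions
```

Because the strategy is refreshed only every `think_every` steps, an agent that picks up the flag at step 2 keeps heading for the enemy base until step 4, when the slow layer next gets a say.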

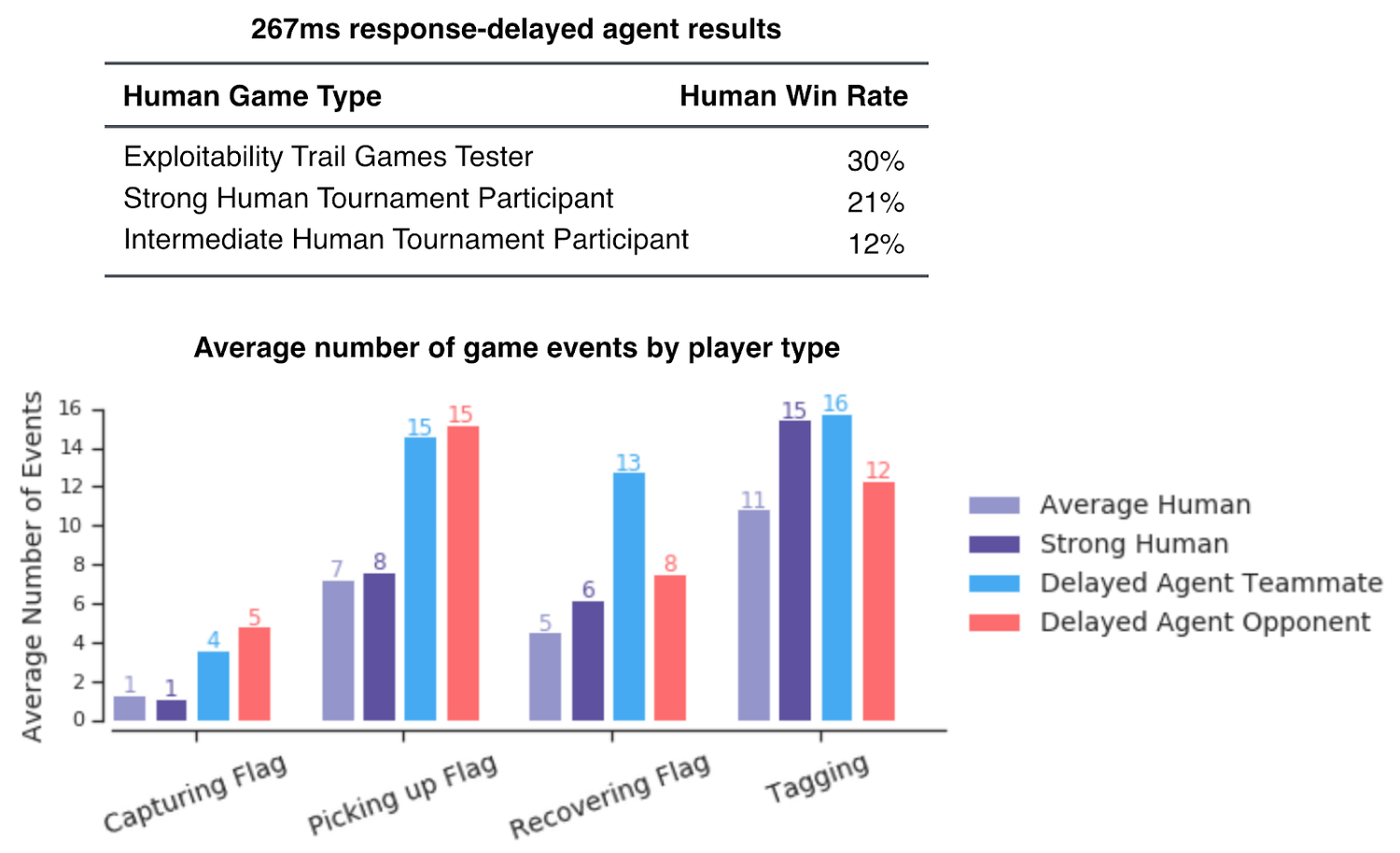

For the tournament, the researchers delayed the AI's reactions by 267 milliseconds, which they calculated to be the average player's reaction time, and it made very little difference to the AI's performance. The AI originally had an accuracy of 80%, compared to humans' 50%, but the researchers reduced that, too. Our AI overlords are simply smarter than us.
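One simple way to impose a reaction delay like this is to feed the agent stale frames. The sketch below is an assumption about how such a handicap could be built, not a description of the researchers' setup: the `DelayedObservations` class and the 67-millisecond frame interval are hypothetical, and only the 267-millisecond figure comes from the study as reported here.

```python
import math
from collections import deque

class DelayedObservations:
    """Hold back observations so the agent only sees the past."""

    def __init__(self, delay_ms=267, frame_ms=67):
        # Round up so the agent is never delayed by less than delay_ms.
        self.delay_frames = math.ceil(delay_ms / frame_ms)
        self.buffer = deque()

    def push(self, obs):
        """Store the newest frame and return the one from delay_frames ago,
        or None while the buffer is still filling up."""
        self.buffer.append(obs)
        if len(self.buffer) > self.delay_frames:
            return self.buffer.popleft()
        return None

if __name__ == "__main__":
    delayed = DelayedObservations()
    for frame in range(6):
        print(frame, delayed.push(frame))
```

With these made-up numbers the delay works out to four frames, so the agent sees nothing for the first four frames and from then on always reacts to the world as it was four frames earlier.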

One of the study's most interesting findings was that the best team was one human and one AI. Although the two could neither communicate, as a pair of humans can, nor anticipate each other's moves, as a pair of AIs might be expected to, the unlikely duo had a 5% higher probability of winning than a pure AI pair.

This suggests that the AI can still be developed further, though it's unclear what future training might entail. It's also interesting to note that while the AI was able to quickly beat players in Go and chess, it won by a much slimmer margin in Quake III. For some time yet, humans will likely retain the edge in modern first-person shooters, where environments, character classes, and weapon options further complicate strategies.