WTF?! If you're interviewing someone via video call and notice they sneeze or cough without moving their face, you're probably interacting with a deepfake. The FBI is warning of an increase in the number of criminals using this method, combined with stolen Personally Identifiable Information (PII), in interviews for tech jobs that enable access to sensitive customer data.

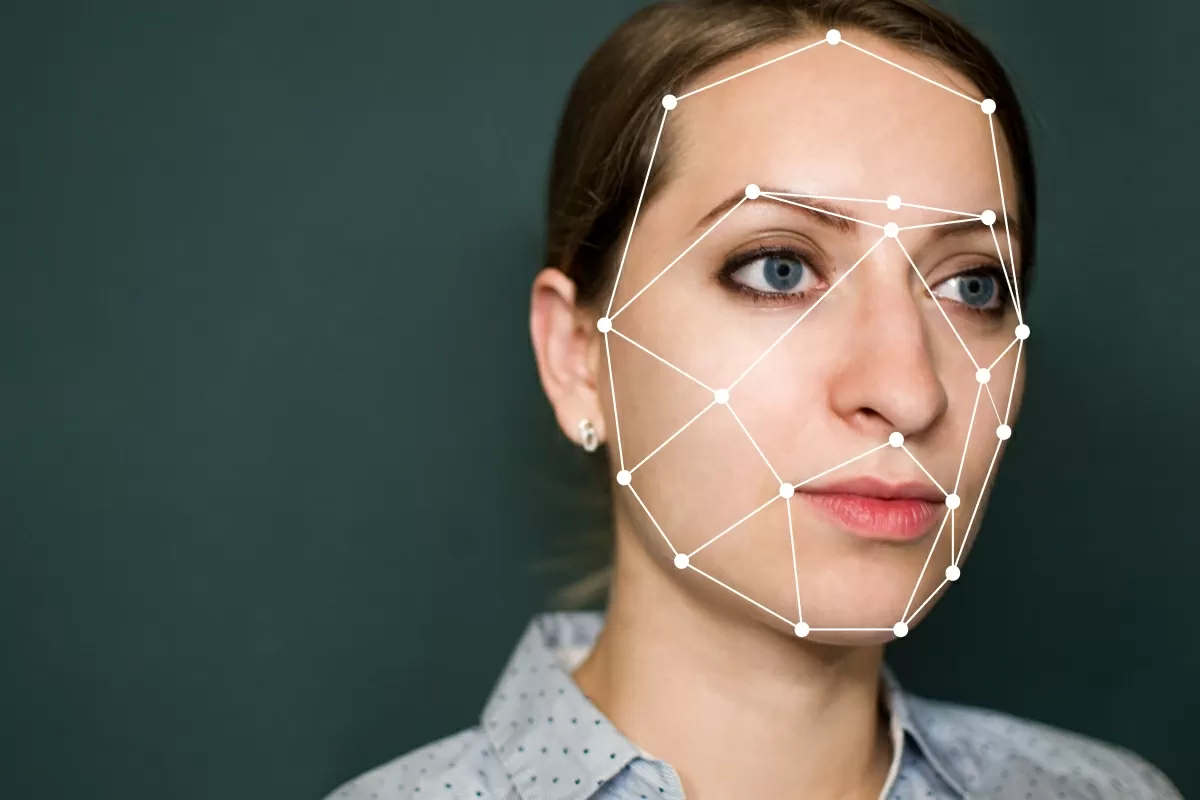

Deepfakes have been around for a few years now. They were originally used to place celebrities' faces on the bodies of adult actresses to create a type of AI-generated porn, which is banned on most platforms. The process has become increasingly advanced since then, leading to laws designed to protect against political and pornographic deepfakes and companies racing to find ways of identifying them.

The FBI's warning states that it is receiving more complaints about deepfakes being used in interviews for remote or work-from-home positions, including jobs in information technology, computer programming, databases, and other software-related roles.

The deepfakes include a video image or recording designed to misrepresent someone as a job applicant. To make themselves more convincing, these criminals often steal the PII of those they are imitating to pass any identity checks the potential employer performs.

The FBI writes that the on-screen person's actions and lip movements often fail to match the audio of the person speaking. "At times, actions such as coughing, sneezing, or other auditory actions are not aligned with what is presented visually," the agency said.

The ultimate goal is to be hired for the remote position, giving the impersonators access to customers' PII, financial data, corporate IT databases, and proprietary information, which is then stolen.

While deepfakes have been used to bring old photos back to life and return a young Mark Hamill to the screen, they have also been utilized for many unsavory purposes. James Cameron is especially worried; the director thinks Skynet could wipe out humanity using deepfake trickery.

Masthead: Mihai Surdu

https://www.techspot.com/news/95119-fbi-warns-more-criminals-using-deepfakes-remote-job.html