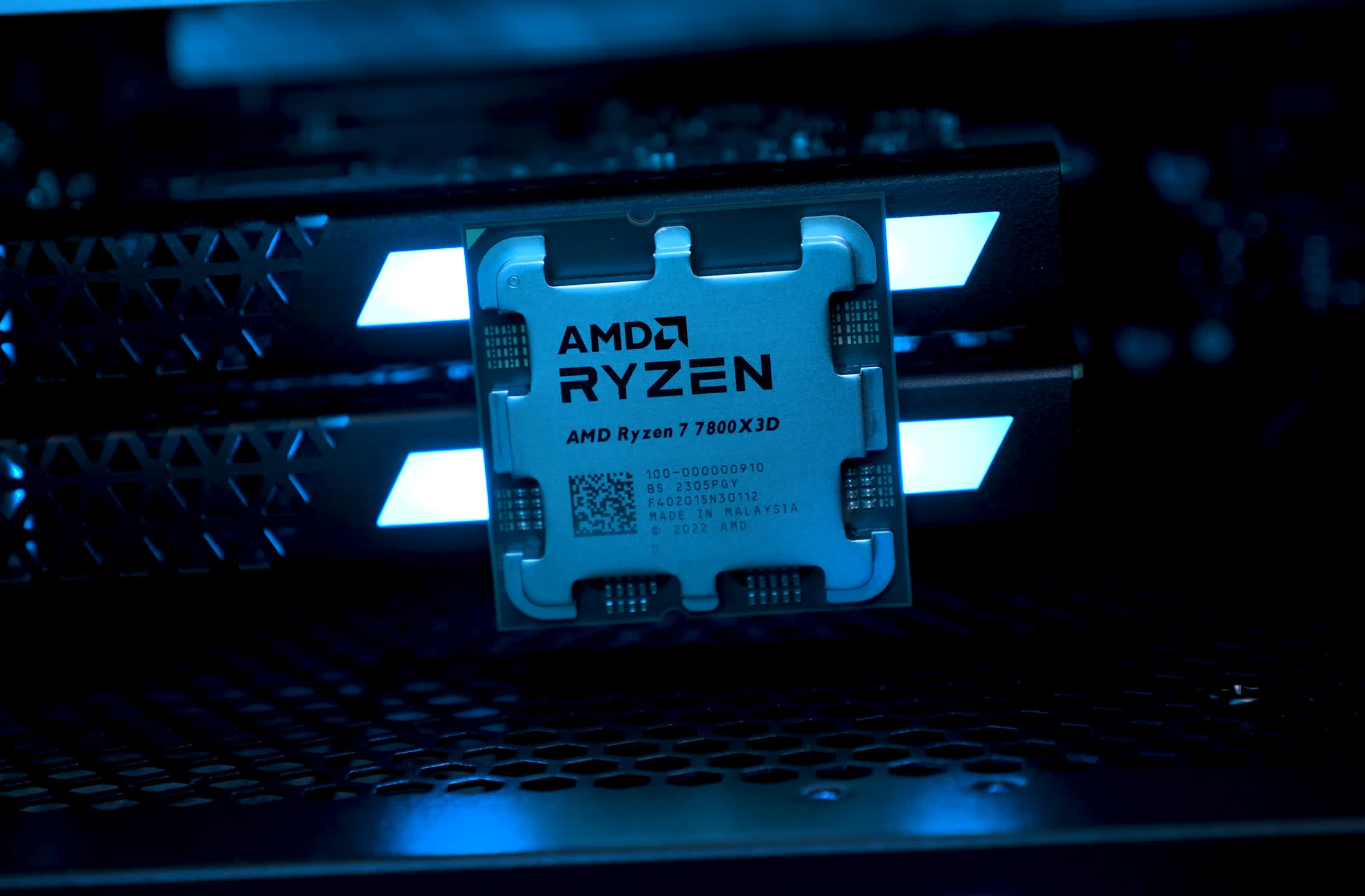

The Ryzen 7 7800X3D is the Zen 4 3D V-Cache CPU that gamers should all be interested in: it's fast and extremely power efficient. Moreover, at $450 the 7800X3D is just $50 more than the 7700X.

AMD Ryzen 7 7800X3D Review: Gaming Efficiency FTW!

- Thread starter Steve

This performs the same on those new A620 motherboards? If that is really the case then that is absolutely the best setup to go for right now. I might still opt for B650 but I expect downward price pressure on that. The x670 was always pointless for gaming, that's more like the old Intel HEDT stuff, Skylake-X and the like.

Vulcanproject

Posts: 1,698 +3,210

100-150w less power draw is not a joke when you're in Europe and paying 40 cents per kilowatt hour.

nitebird

Posts: 32 +35

@Techspot I know benchmarks are done with as few background programs as possible to ensure maximum game performance and apples-to-apples comparison, but this is getting further from my real use case where I am playing a game, but also chatting on Discord, streaming my game, and watching two streams on my second monitor, plus 20 tabs open in Firefox. Even though the gaming performance of the two CPUs is the same, I would assume the 7950X3D would preserve its gaming performance better than the 7800X3D in this multitask gaming scenario. Is there a way to quantify this from the benchmarks you show in your article?

MarcusNumb

Posts: 154 +299

Come on guys, this CPU deserves a 90, not just 85 xD

Neatfeatguy

Posts: 1,620 +3,049

@Techspot I know benchmarks are done with as few background programs as possible to ensure maximum game performance and apples-to-apples comparison, but this is getting further from my real use case where I am playing a game, but also chatting on Discord, streaming my game, and watching two streams on my second monitor, plus 20 tabs open in Firefox. Even though the gaming performance of the two CPUs is the same, I would assume the 7950X3D would preserve its gaming performance better than the 7800X3D in this multitask gaming scenario. Is there a way to quantify this from the benchmarks you show in your article?

That's a you problem. The benchmarks are there to give you a guideline on how these systems run programs/games solely based on that alone and not taking into account the billions of different software configurations that everyone has going on their own individual systems.

If you're using your system heavily for certain tasks, build your system as best you can to accommodate your needs. Streaming? Make sure you have more cores.

Lots of tabs open in a browser? Make sure you have lots of RAM.

Expecting any tech or youtube channel to do something like you're asking would be a daunting task. Also, once they go down this road, where do we draw the line?

Theinsanegamer

Posts: 5,448 +10,222

100-150w less power draw is not a joke when you're in Europe and paying 40 cents per kilowatt hour.

Nope. The math simply does not work. At 8 hours per day, 365 days per year, running at full blast the ENTIRE time on that one specific super heavy workload, it's $117 difference in electricity. In that scenario, you are either making money off of that work (in which case that $117 per year isn't even peanuts, OR you should be buying something larger to do more work in the same time) or you are running insane workloads as a hobby, in which case you are going to be wasting far more on buying the hardware in the first place.

If the price of electricity is a concern, you should not be buying $500+ processors. Period.

passwordistaco

Posts: 572 +1,240

Vulcanproject

Posts: 1,698 +3,210

Nope. the math simply does not work. At 8 hours per day, 365 days per year, running at full blast the ENTIRE time on that one specific super heavy workload, it's $117 difference in electricity. In that scenario, you are either making money off of that work (in which case that $117 per year isnt even peanuts, OR you should be buying something larger to do more work in the same time) or you are running insane work loads as a hobby, in which case you are going to be wasting far more on buying the hardware in the first place.

If the price of electricity is a concern, you should not be buying $500+ processors. Period.

The 7800X3D is a $450 CPU.

Math works fine. 4 hours a day it's $60 a year if the difference is calculated at 100w, the minimum amount at load. Probably more though looking at various tests. I keep a CPU in any given machine at least 5 years typically. Over $300 over the life of the CPU in that use case. It pays for an upgrade.

Living costs are high in Europe right now, I am quite sure people will take such a saving and be perfectly happy about it. I would since I just ordered a 7800X3D and not a 13900K.

I would remind you what works or not is not the same for everyone.
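For anyone who wants to check the figures traded in this exchange, here is a minimal sketch of the arithmetic. It assumes the 100 W difference and the 40 cents per kilowatt hour quoted above, and it treats that rate as 0.40 in the same currency as the totals, as the posts do. The function name `annual_cost` is illustrative only.

```python
def annual_cost(watts_saved: float, hours_per_day: float, price_per_kwh: float) -> float:
    """Yearly electricity cost of a constant power difference."""
    kwh_per_year = watts_saved / 1000 * hours_per_day * 365
    return kwh_per_year * price_per_kwh


# Assumed inputs taken from the posts: 100 W difference, 0.40 per kWh.
four_hours = annual_cost(100, 4, 0.40)
eight_hours = annual_cost(100, 8, 0.40)

print(f"4 h/day: {four_hours:.2f} per year, {four_hours * 5:.2f} over 5 years")
print(f"8 h/day: {eight_hours:.2f} per year")
```

At 100 W this works out to roughly 58 per year for 4 hours a day (about 292 over five years) and roughly 117 per year for 8 hours a day, so both posters' numbers come from the same formula with different hours.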

nitebird

Posts: 32 +35

That's a you problem.

Both AMD and Intel seem to be heading towards big and small cores in the future, and at least part of this is to ensure maximum performance of the primary application without causing stutters in the background apps. If a review site is able to quantify it, I would be interested in reading about it.

Theinsanegamer

Posts: 5,448 +10,222

The 7800X3D is a $450 CPU.

Math works fine. 4 hours a day it's $60 a year if the difference is calculated at 100w, the minimum amount at load. Probably more though looking at various tests. I keep a CPU in any given machine at least 5 years typically. Over $300 over the life of the CPU in that use case. It pays for an upgrade.

Living costs are high in Europe right now, I am quite sure people will take such a saving and be perfectly happy about it. I would since I just ordered a 7800X3D and not a 13900K.

I would remind you what works or not is not the same for everyone.

Again, you are looking at a $450 CPU and worrying about $60 in electricity over 5 years.

If that is a concern for you, you do not need a $450 gaming CPU. Gaming is a wasteful hobby. Go get an i3 for $100, it plays all the same games.

It's still not a selling point. You're spending another $350 over an i3 to save a hypothetical $60 over 5 years over an i9. That is terrible math.

Neatfeatguy

Posts: 1,620 +3,049

Both AMD and Intel seem to be heading towards big and small cores in the future, and at least part of this is to ensure maximum performance of the primary application without causing stutters in the background apps. If a review site is able to quantify it, I would be interested in reading about it.

So...how? What are the parameters?

Maybe you use some third party software to manage RGB. Someone else doesn't. Someone uses iCUE. Others don't.

Maybe you like Chrome and always have 30 tabs open. Someone else doesn't. Someone else likes Opera and only has 10 tabs open at once.

Maybe you stream at 30fps at 1080p. Maybe someone else does so at 60fps 1080p. Others don't stream.

Maybe someone constantly has a couple of VMs running on their system and others don't.

Maybe you keep Steam and EGS running when your system starts. Someone else only uses GoG Galaxy that runs constantly. Maybe someone else runs all of them, plus Origin and Uplay. Maybe someone else uses these programs, but only opens them when they need to. Maybe someone else doesn't use them ever.

Maybe you use Discord's app, maybe someone else uses Discord through the web browser. Maybe someone uses TeamSpeak.

Any tech site that wants to take on a challenge like this, they'd only be left to criticism because they'd constantly be told they're not doing enough or not using the right programs or they're using programs that aren't common or.....

You see the issue here? You won't make everyone happy and there are too many combinations of different software and hardware. Why open yourself to the criticism of all the whinyassbabies out there just because they don't like how you set up your test system? The headache isn't worth it.

godrilla

Posts: 1,365 +893

If you game at 4k the performance advantage over the 7700x ( which can easily oc to 5.5 ghz to 5.65 ghz oc cores on air) is not worth the price premium imo. You can add $47 currently and get Ryzen 7 7700X, MSI B650-P Pro WiFi, G.Skill Flare X5 Series 32GB DDR5-6000 Kit, Computer Build Combo

$749.96 SAVE $253.30

$496.66 at microcenter before the 5% membership discount fyi.

Vulcanproject

Posts: 1,698 +3,210

Again, you are looking at a $450 CPU and worrying about $60 in electricity over 5 years.

If that is a concern for you, you do not need a $450 gaming CPU. Gaming is a wasteful hobby. Go get an i3 for $100, it plays all the same games.

It's still not a selling point. You're spending another $350 over an i3 to save a hypothetical $60 over 5 years over an i9. That is terrible math.

I don't need a gaming CPU, i3 or otherwise. The nutritional value of silicon is not great. However what we desire is by implication something we can't have without qualification, otherwise we would already have it. Very little of what you might want in life is free.

Therefore making the things we want a better financial proposition is of at least some value, no? I wanted a fast car, does that then mean fuel economy or other running costs no longer matter at all and are not worthy of any consideration?

You COULD buy a beater or a bus ticket. You COULD walk. Many prefer not to. That's still personal choice.

In the end I never claimed running costs as the primary reason one would choose a 7800X3D over a 13900K. I do certainly see it as one factor worthy of consideration.

nitebird

Posts: 32 +35

So...how? What are the parameters?

It's up to Techspot, they are the professionals... My off-the-cuff idea would be to use a stress tester with a "compatible workload" that you can set the number of threads/ops per sec requested, and see how much work you can squeeze out of a CPU with no more than a 10% drop in FPS in a game. This would give me a rough idea of the "leeway" left in a CPU while gaming. I wouldn't test every game, maybe 2 or 3 with moderate to high CPU usage to create a compare and contrast. Don't ask me for more than this, I am here because I don't know.
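One way to read this "leeway" idea is as a simple search over measured data points. The sketch below is hypothetical: the function name `headroom`, the sample numbers, and the notion of recording average FPS at each background thread count are assumptions for illustration, not a test anyone in this thread or at TechSpot has run.

```python
def headroom(baseline_fps: float, runs: dict[int, float], max_drop: float = 0.10) -> int:
    """Return the largest background thread count whose FPS stays within max_drop of baseline.

    runs maps the number of background stress threads to the measured average FPS.
    Returns 0 if even the lightest background load breaks the limit.
    """
    floor = baseline_fps * (1 - max_drop)
    best = 0
    for threads in sorted(runs):
        if runs[threads] < floor:
            break  # FPS fell below the allowed drop; keep the previous level
        best = threads
    return best


# Made-up measurements for illustration only: baseline 120 FPS with no background load.
sample = {2: 118.0, 4: 114.0, 6: 109.0, 8: 97.0}
print(headroom(120.0, sample))
```

With these invented numbers the 10% limit is 108 FPS, so the function reports 6 background threads of headroom. Comparing that figure across two CPUs in two or three CPU-heavy games would give the rough "compare and contrast" described above.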

godrilla

Posts: 1,365 +893

Again, you are looking at a $450 CPU and worrying about $60 in electricity over 5 years.

If that is a concern for you, you do not need a $450 gaming CPU. Gaming is a wasteful hobby. Go get an i3 for $100, it plays all the same games.

It's still not a selling point. You're spending another $350 over an i3 to save a hypothetical $60 over 5 years over an i9. That is terrible math.

Actually, if they are worried about power consumption, the 7600X came close in power usage, and at 1440p and 4K it will have a negligible real-world performance delta, which saves you hundreds of dollars.

for eg.

AMD Ryzen 5 7600X, MSI B650-P Pro WiFi, G.Skill Flare X5 16GB DDR5-5600 Module, Computer Build Combo

$533.96 SAVE $133.97

$399.99 at microcenter

Plus you still have an upgrade path and the money saved can be applied to a better gpu. Linus tech tips paired the 7800x3d with a 6700xt

DSirius

Posts: 788 +1,613

Great review Steve, thank you.

I would add that you should take into consideration the price of the cooler too, because a liquid cooler for the 13900K "oven" is more expensive than a cooler for the Ryzen 7800X3D. A liquid cooler for the 13900K is a must, otherwise performance drops in both productivity and gaming.

The real cost of CPU+MB+RAM+cooler for a Ryzen gaming system is simply better than for an Intel one. Though Intel can take comfort in winning first place and the crown for the most power-hungry processor.

What I like about the Intel 13900K is the price. Intel found the best price for the 13900K, fitting it between AMD processors so that it appeals to buyers who do not pay attention to the real cost of CPU+MB+RAM+cooler.

Real competition is good, and fair competition would be great.

P.S. For TechSpot editors and staff: I find the 85-point TechSpot score for the Ryzen 7800X3D inaccurate, or at least debatable. You gave 90 points to the Intel 13600K, which is clearly an inferior product. Can you explain why? Can you share the scoring methodology with us and show it with the 13600K and 7800X3D as examples?

Personally I would give at least 90 points to 7800X3D too

Neatfeatguy

Posts: 1,620 +3,049

It's up to Techspot, they are the professionals... My off-the-cuff idea would be to use a stress tester with a "compatible workload" that you can set the number of threads/ops per sec requested, and see how much work you can squeeze out of a CPU with no more than a 10% drop in FPS in a game. This would give me a rough idea of the "leeway" left in a CPU while gaming. I wouldn't test every game, maybe 2 or 3 with moderate to high CPU usage to create a compare and contrast. Don't ask me for more than this, I am here because I don't know.

If TS is daring enough to take on the backlash of crybabies out there to try and do this, that's on them. I know another big tech site said they wouldn't do this because of all the millions/billions of different parameters out there and that you couldn't make everyone happy and they refused to be a sounding board for those that cry/complain about tests not being done with their software and usage.

You should be able to take into consideration what the reviews do show you and extrapolate some kind of performance you could expect to have.

Really, this is how it plays out for what hardware you should be looking for:

If you want to stream, get more cores.

If you want to have RAM heavy tasks going, get more RAM.

If you want all the eyecandy in games, get a top-end GPU with plenty of VRAM.

You're not going to get a useful answer from a tech site trying to appease a few people with a "normal" system workload as they test games. A "normal" system workload isn't the same for everyone. Even when tech sites are doing barebone testing people still complain about things such as they didn't use the "correct" RAM, or they didn't overclock enough, or they didn't use an AIO cooler, or they didn't test FSR/DLSS correctly in a way they wanted it done and so on.

I personally wouldn't want TS or any other tech site to be punished more by the public, they're criticized enough already just from doing these reviews, while trying to do a review using a "compatible workload".

nitebird

Posts: 32 +35

I personally wouldn't want TS or any other tech site to be punished more by the public, they're criticized enough already just from doing these reviews, while trying to do a review using a "compatible workload".

It's certainly not easy. I'm sure TS receives many requests, so even if mine is read I expect a decision based on cost versus benefit like all businesses. Thanks for weighing in.

Both AMD and Intel seem to be heading towards big and small cores in the future, and at least part of this is to ensure maximum performance of the primary application without causing stutters in the background apps. If a review site is able to quantify it, I would be interested in reading about it.

TS and other tech experts have done these tests many many many times and the result is always the same: these background tasks have zero impact whatsoever on gaming performance. They are parked and do nothing.

Also nothing scales past 6 cores, and even the most multi-threaded games have 85% of their workload on 2 cores. The remaining 15% can scale up to 6 and then there is nothing beyond that.

Not only are e-cores worthless for gaming and "background tasks", you don't even need more than 8 cores period. Really you can get by with 6 but sure, fine, because of the "background tasks" give them those extra 2 cores to work with.

nitebird

Posts: 32 +35

Also nothing scales past 6 cores, and even the most multi-threaded games have 85% of their workload on 2 cores. The remaining 15% can scale up to 6 and then there is nothing beyond that.

The game I am building for, Star Citizen, uses all 8 P-cores of my 12700K@5.2GHz at 100% at certain locations. If I run additional streaming background apps, there is another 20% FPS penalty. My 4090 is not the bottleneck as the utilization is 40-50% in those CPU bottlenecked scenes. Can you tell me if the 7800X3D or 7950X3D would be better or equal for my use? Or does my situation not exist because nothing uses 6 cores?

Actually, if they are worried about power consumption, the 7600X came close in power usage, and at 1440p and 4K it will have a negligible real-world performance delta, which saves you hundreds of dollars.

for eg.

AMD Ryzen 5 7600X, MSI B650-P Pro WiFi, G.Skill Flare X5 16GB DDR5-5600 Module, Computer Build Combo

$533.96 SAVE $133.97

$399.99 at microcenter

Plus you still have an upgrade path and the money saved can be applied to a better gpu. Linus tech tips paired the 7800x3d with a 6700xt, while the money saved could get you the 6950XT at $549.99 with cpu Combo at microcenter and see almost double performance in rasterization.

You should be building a system to do the things that you want/need to do now AND for the next few years. While gaming at higher resolutions now will usually keep you GPU limited, after a few years newer games will be CPU limited as well. Why not spend a little bit more now to push back the time when the CPU limit appears at higher resolutions?

Also, 2023 games are already wanting 32GB of RAM. Building at 16GB is likely to be unwise.

Who comes up with these scores? There must be an AMD penalty that takes 5-10 points off.

daffy duck

Posts: 250 +190

Meh, so much love for this. It barely makes a difference in the real world in the majority of games, is much slower for productivity and in many cases only uses a bit less power than the 7700X. Sure, in some cases it's much better, but the loss of clock speeds is too much IMO for other stuff, and look at those games where L3 cache isn't important. I honestly couldn't care less about the difference between 250fps and 280fps, and the gains on lesser cards than the 4090 will be smaller. The 7700X is already a terrific gaming CPU.

godrilla

Posts: 1,365 +893

You should be building a system to do the things that you want/need to do now AND for the next few years. While gaming at higher resolutions now will usually keep you GPU limited, after a few years newer games will be CPU limited as well. Why not spend a little bit more now to push back the time when the CPU limit appears at higher resolutions.

Also, 2023 games are already wanting 32GB of RAM. Building at 16GB is likely to be unwise.

Ah yes the 7700x is also a good option for eg.

Ryzen 7 7700X, MSI B650-P Pro WiFi, G.Skill Flare X5 Series 32GB DDR5-6000 Kit, Computer Build Combo by adding $47

$749.96 SAVE $253.30

$496.66 at microcenter before 5% discount (don't you love to support brick and mortar locals?)

Which can easily oc to 5.55 GHz to 5.65 GHz on air.

questions?
