In context: The sudden rise and advancement of artificial intelligence systems over the last few months have brought fears of their potentially harmful effects on society. Not only might AI threaten human jobs and creativity, but smart machines' use in warfare could have catastrophic consequences. To address this danger, the first global Summit on Responsible Artificial Intelligence in the Military Domain (REAIM) was held last week, leading to countries signing an agreement to put the responsible use of AI higher on the political agenda.

Co-hosted by the Netherlands and South Korea last week in The Hague, the REAIM conference was attended by representatives from over 60 countries, including China. Ministers, government delegates, think tanks, and industry and civil organizations participated in the talks. Russia was not invited to take part, while Ukraine did not attend.

The call for action, signed by all attendees apart from Israel, confirmed that nations were committed to developing and using military AI in accordance with "international legal obligations and in a way that does not undermine international security, stability, and accountability."

US Under Secretary of State for Arms Control Bonnie Jenkins called for the responsible use of AI in military situations. "We invite all states to join us in implementing international norms, as it pertains to military development and use of AI" and autonomous weapons, said Jenkins. "We want to emphasize that we are open to engagement with any country that is interested in joining us."

China's representative Jian Tan told the summit that countries should "oppose seeking absolute military advantage and hegemony through AI" and work through the United Nations.

Other issues signatories agreed to address include the reliability of military AI, the unintended consequences of its use, escalation risks, and the way humans need to be involved in the decision-making process.

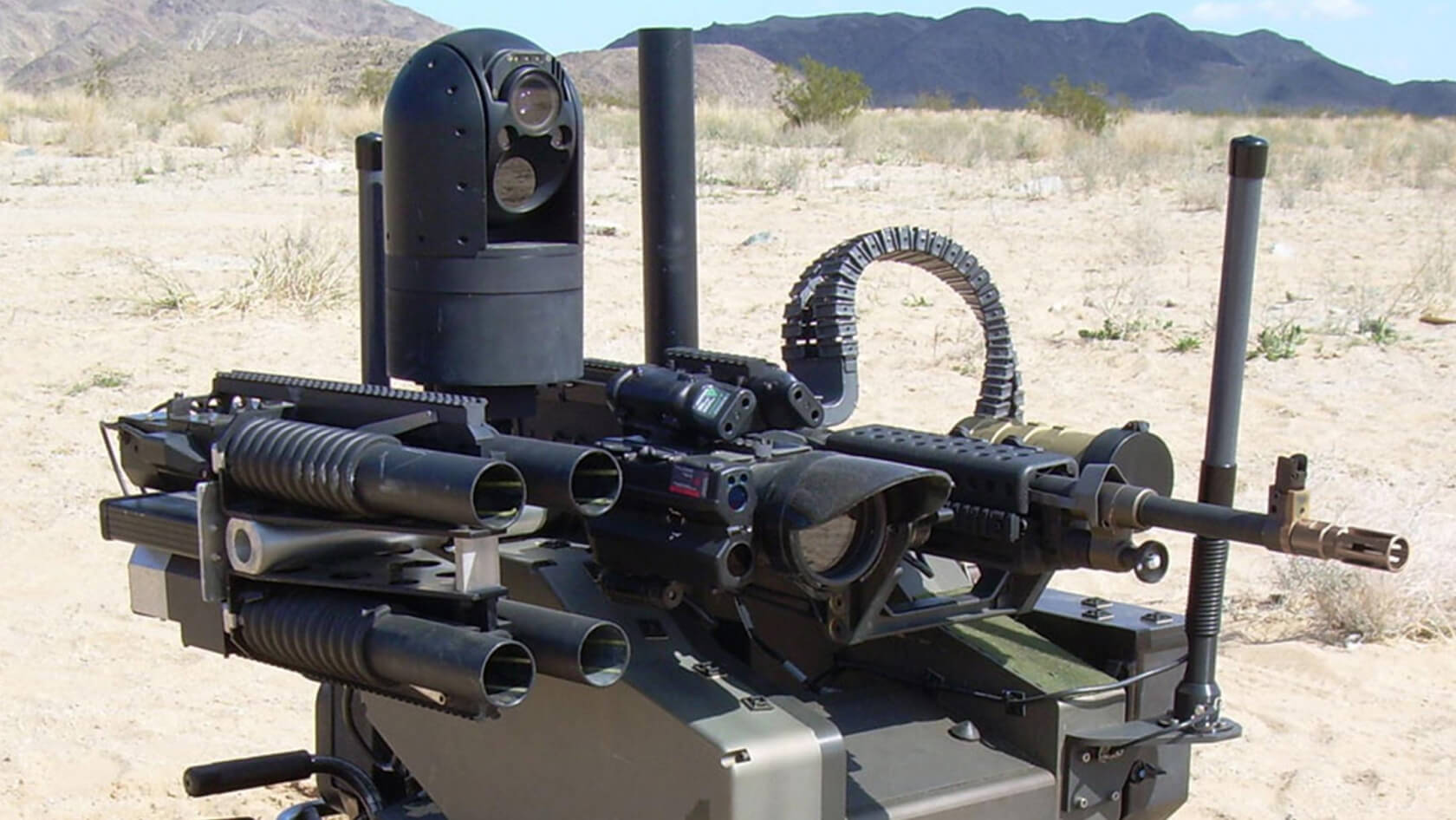

In 2019, the US Department of Defense (DoD) said humans will always have the final say on whether autonomous weapons systems open fire on live targets. As for the unintended consequences mentioned in the statement, some fear India's push into AI-powered military systems could lead to a nuclear war with Pakistan through the increased risk of pre-emptive strikes.

Some attendees did note the benefits of using AI in conflict, especially in Ukraine, where machine learning and other technologies have been used to fend off a larger, more powerful aggressor.

"Imagine a missile hitting an apartment building," said Dutch deputy prime minister Wopke Hoekstra. "In a split second, AI can detect its impact and indicate where survivors might be located. Even more impressively, AI could have intercepted the missile in the first place. Yet AI also has the potential to destroy within seconds."

Critics say the statement isn't legally binding and fails to address many other concerns around AI's use in military conflicts, including AI-guided drones.

More fears over AI's many potential applications in war were raised last week after Lockheed Martin revealed its new training jet was flown by artificial intelligence for over 17 hours, marking the first time that AI has been engaged in this way on a tactical aircraft. Elsewhere, former Google CEO and Alphabet chair Eric Schmidt said that artificial intelligence could have an effect on wars similar to that of the introduction of nuclear weapons.