GPU Pricing Update, Year in Review: Price Trends Charted

Welcome to our last GPU pricing update of the year. We'll discuss upcoming GPUs, price trends for the last 12 months, and whether you should buy a graphics card now or wait.

AMD patent potentially describes future GPU chiplet design

Be careful who's selling you that high-end AMD Radeon GPU, especially on Amazon

Path Tracing vs. Ray Tracing, Explained

#TBT Every few years it seems like there's an amazing new technology with the promise of making games look ever more realistic. We've had shaders, tessellation, shadow mapping, ray tracing – and now there's path tracing.

Black Friday GPU Buying Guide: November GPU Pricing Update

For this month's GPU pricing update, we have Nvidia's upcoming GeForce 40 Super rumors, current-gen GPU pricing, and what sort of deals you should be looking out for during Black Friday.

Radeon 7900M trades blows with laptop RTX 4090 in Vulkan benchmarks

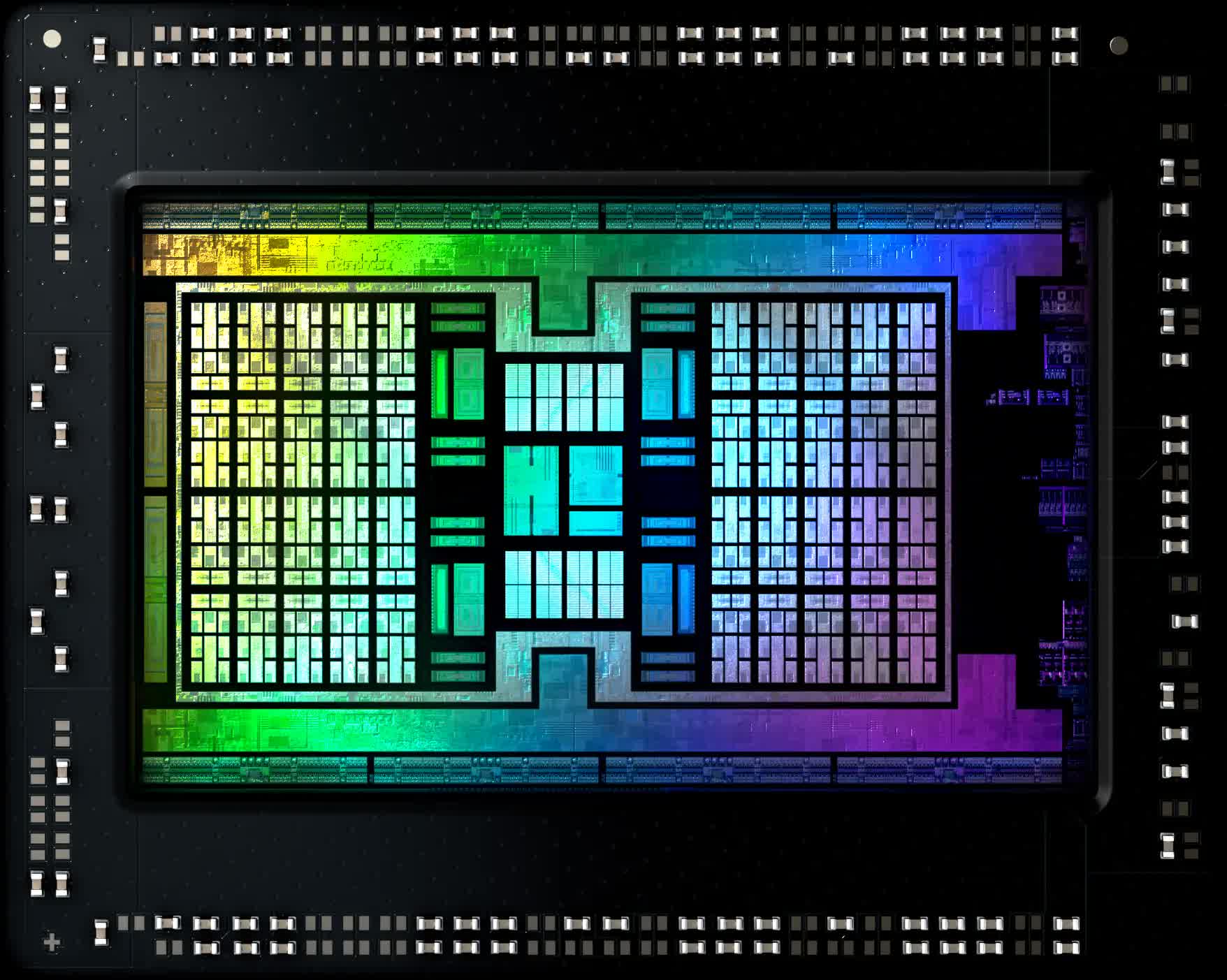

4 Years of AMD RDNA: Another Zen or a New Bulldozer?

It's been four years since AMD launched RDNA, the successor to the venerable GCN graphics architecture. We take a look through the tech and numbers to see just how successful it's been.

Are GPU Prices Going Up Now? October GPU Pricing Update

Welcome to our monthly GPU pricing update. There are bits of news to go through, a few odd GPU launches, and some unfortunate price hikes. There are also some positive GPU price adjustments to discuss.

AMD FSR 3 Frame Generation Analyzed: DLSS 3 Contender? Not So Fast

AMD's FSR 3 frame generation technology made a surprise debut earlier this month in two games, and we're ready to give you an early look, covering frame pacing, image quality and latency.

AMD Radeon RX 7900 XTX vs. Nvidia GeForce RTX 4080: The Re-Review

With the release of new drivers and new games, plus requests from many readers, it's time for an updated look at the Radeon RX 7900 XTX vs. GeForce RTX 4080 rivalry with fresh benchmarks.

Cyberpunk 2077: Phantom Liberty GPU Benchmark

The Phantom Liberty update brings significant changes to Cyberpunk 2077, including DLSS 3.5 and further graphics and hardware support enhancements. It's GPU benchmark time.

Nvidia Responds So AMD Can't Win: September GPU Pricing Update

Welcome back to our monthly GPU pricing update. So, the GPU market still kind of sucks, but there's a silver lining with new launches and some price adjustments improving things a little this month.

Retailers discount Nvidia GeForce RTX 4070 to $549

GeForce RTX 4070 vs. Radeon RX 7800 XT: 45 Game Benchmark

In this 45-game benchmark shootout, we put the $600 GeForce RTX 4070 and the $500 Radeon RX 7800 XT head-to-head to see which comes out as the ultimate mid-range GPU winner.

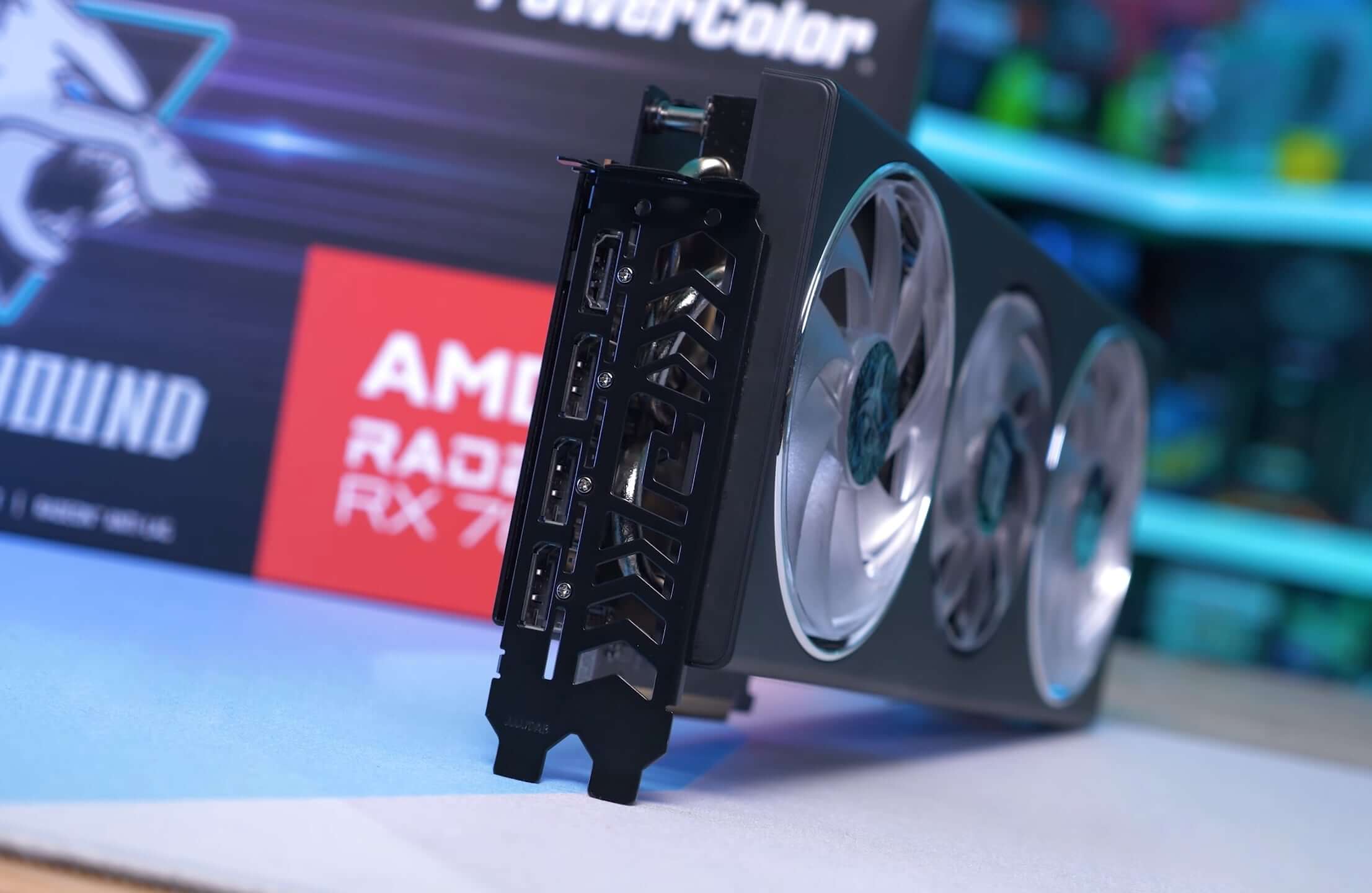

Radeon RX 7700 XT Review: AMD's latest mid-range GPU

The Radeon RX 7700 XT is AMD's latest mid-range GPU targeting the $450 price point. However, even on paper we can anticipate a few issues with the new card.

Latest AMD Adrenalin drivers add Radeon Anti-Lag+, Boost and HYPR-RX

AMD Radeon RX 7800 XT Review: Old Performance, New Price Point

Given that the Radeon 7800 XT mirrors the 6800 XT's performance, it's not particularly thrilling, especially after 3 years. But at just $500, the 7800 XT will likely become the go-to option for many.

Starfield GPU Benchmark: 32 GPUs Tested, FSR On and Off

Bethesda's latest RPG brings with it some space opera vibes and striking visuals. Let's take a look at Starfield's GPU performance by testing 32 GPUs using different quality presets, resolutions and FSR.

AMD's new Radeon drivers boost Starfield performance up to 16%

Is AMD finally targeting Nvidia? Radeon RX 7800 XT & RX 7700 XT launch details, FSR 3, and more

GPU Prices Drop Even Further, Sort Of - New Radeons Incoming

It's time for our monthly GPU pricing update. Although there have been no major releases to shake things up, new AMD Radeons are coming soon, and there's been some price movement across new and old-gen GPUs.

AMD Radeon RX 7900 GRE: The RDNA 3 GPU You Can't Really Buy

We take a look at the new Radeon RX 7900 GRE or "Golden Rabbit Edition," an odd name for a gaming graphics card. It's going to be sold mostly in China, but you may be able to get one elsewhere, too.