The Ryzen 9 7900X3D boasts 12 cores, 24 threads, a 120W TDP, 140MB of cache, and a boost clock of up to 5.6 GHz. It is an excellent performer whose biggest drawback was pricing, but with the price down from $600 to $409, it makes for a far more compelling proposition.

AMD Ryzen CPUs are impacted by all of these serious vulnerabilities

A hot potato: All users with AMD Ryzen processors from the last few years should check and update their motherboard firmware ASAP, especially if they haven't done so since before 2023. AMD has published a detailed chart describing four severe security issues affecting server, desktop, workstation, HEDT, mobile, and embedded Zen CPUs. Recent BIOS updates have addressed most, but not all, of the flaws.

Intel Core i9-14900KS leak reveals 6.2 GHz boost clock and over 400W of power consumption

It will be the flagship Raptor Lake Refresh CPU

Microsoft to tighten Windows 11 hardware requirements in upcoming update, impacting users of vintage PCs

For those who managed to get Windows 11 running on 15-year-old PCs

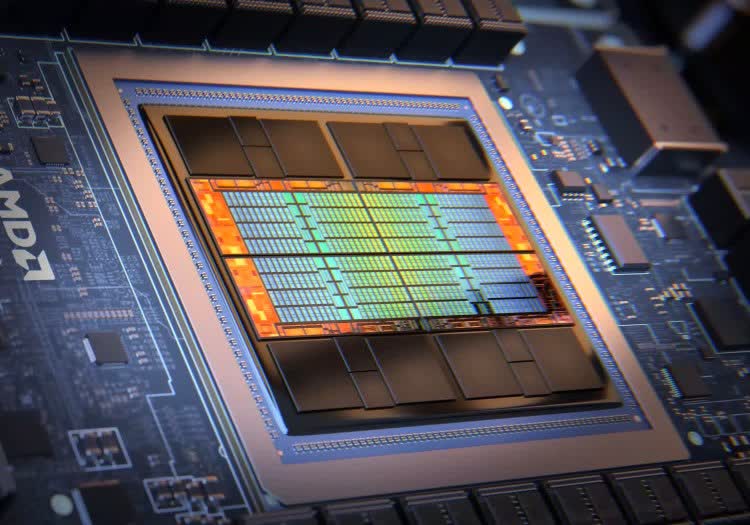

Nvidia's Grace Hopper GH200 superchip benchmarked, holds up well against AMD and Intel competition

Nvidia's initial claims about GH200's performance hold up for the most part

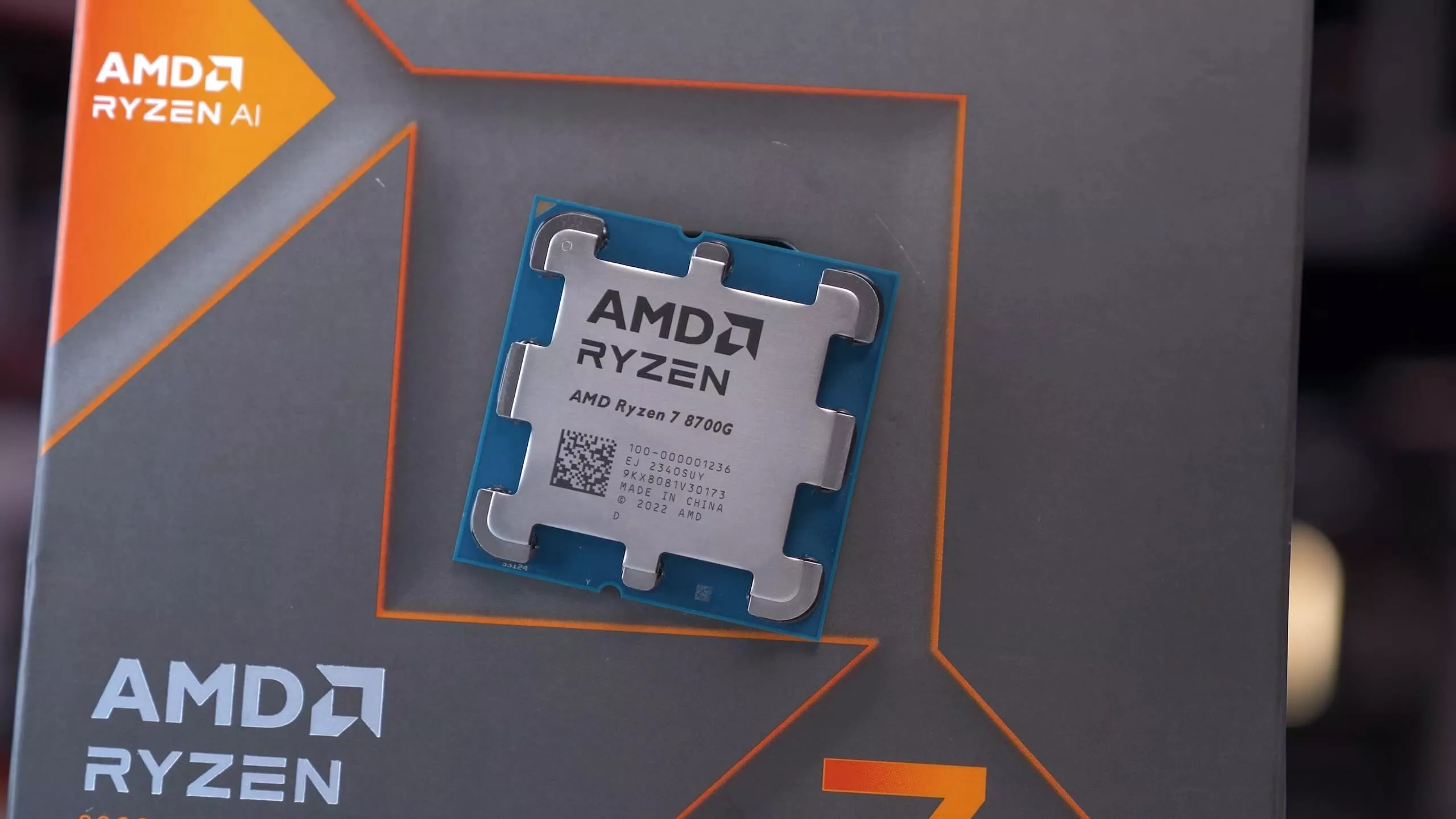

Overclocking the Ryzen 7 8700G's integrated Radeon 780M graphics

Not a ton of overclocking headroom on the iGPU

AMD sees surge in CPU revenue share, thanks to Epyc and Ryzen processor demand

Taking the fight to Intel

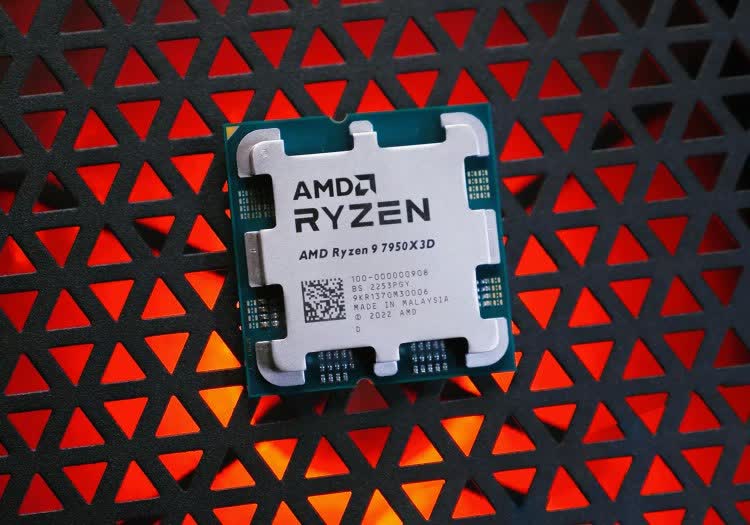

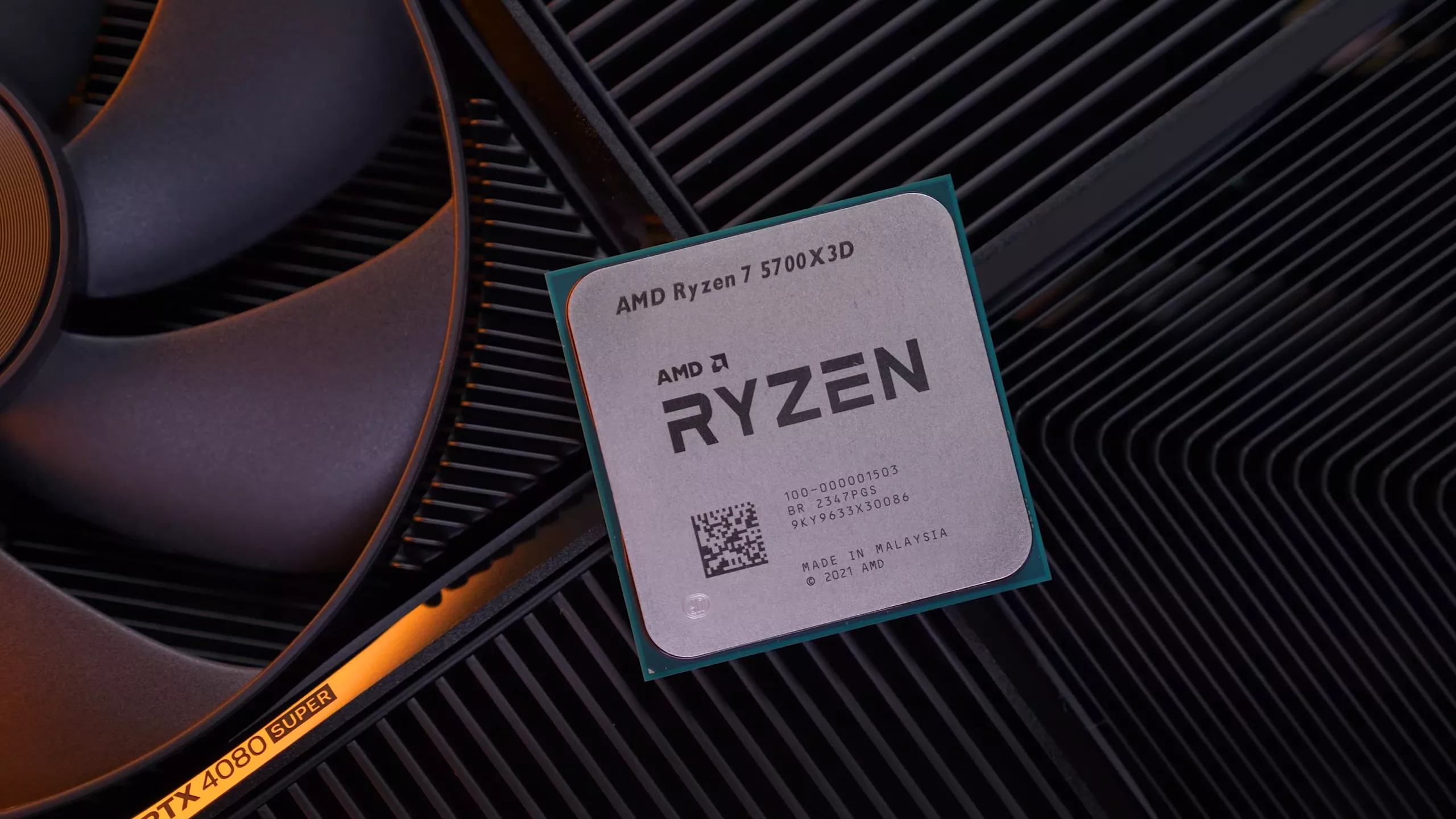

AMD Ryzen 7 5700X3D Review: 3D V-Cache for $250

AMD's Ryzen 7 5700X3D, while technically new, is not really so. What we have here is binned silicon that couldn't be sold as a 5800X3D. Instead of being thrown away, it has been cut down and offered for just $250.

AMD hid a fun Easter egg on its Athlon K7 CPU that went undiscovered until now

Easter eggs are more fun when they aren't immediately discovered

Neat: Hardware photographer Fritzchens Fritz recently stumbled across a fascinating Easter egg when examining an Athlon Classic CPU from AMD. When inspecting an Athlon K7 under magnification, Fritz discovered an outline of the state of Texas in one corner, right above a revolver firing a bullet. The state is believed to be a nod to Austin, where AMD has some facilities, but the gun could mean anything (perhaps AMD firing a shot at Intel?).

AMD is set to bring the heat with Zen 5, Ryzen 9000, and new X870E motherboards

In context: With mandatory USB4 support coming to AMD's next-gen X870E chipsets, upgrading to Zen 5 Ryzen 9000 CPUs could get pricey. The new connectivity standard requires an extra controller chip, which vendors will likely pass on as higher motherboard costs. Be ready to budget more than expected if you're planning a Zen 5 build.

PC CPU shipments grew nearly 22% in Q4 amid robust laptop and desktop sales

The growth in CPU shipments mirrors the recovery in the global PC market

Intel may be working on new "Bartlett Lake-S" budget CPUs for the LGA 1700 platform

Bartlett Lake-S could be a lucrative upgrade option for Alder Lake users on a budget

Lamptron unveils CPU cooler with Full HD display that doubles as second screen

Adding style to your air cooling needs

Intel Xeon W9-3595X Geekbench listing reveals 60 cores, 120 threads and 4.6GHz base clock

The Xeon W9-3595X is expected to be the flagship Sapphire Rapids chip

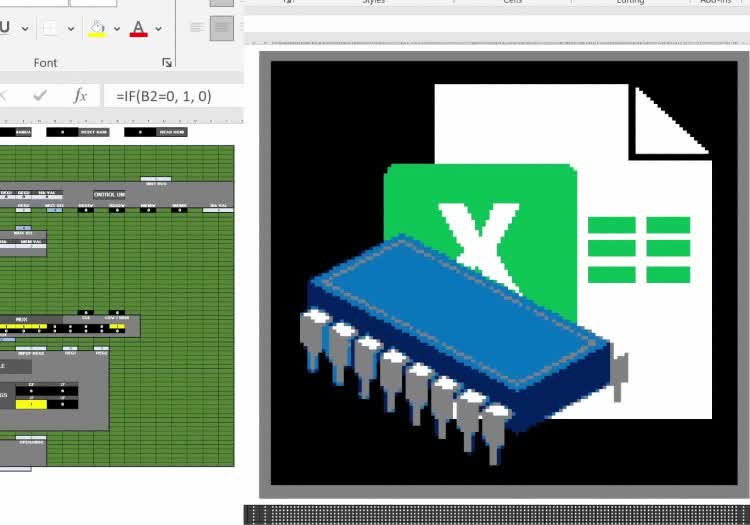

Hobbyist builds fully functioning 16-bit CPU in Microsoft Excel

Turns out, Excel is a lot more interesting than we ever imagined

In a nutshell: In an impressive display of creativity and technical finesse, a hobbyist has managed to build a fully functioning 16-bit CPU entirely within Microsoft Excel. The project provides a unique hands-on way to explore low-level computing concepts and highlights Excel's flexibility beyond boring spreadsheets, letting anyone download and tinker with a miniature computer architecture.

Intel says major AI boost is coming in Panther Lake CPUs, on top of Arrow and Lunar Lake chip improvements

Panther Lake is expected to debut in 2025

AMD Ryzen 7 8700G Review: Most Powerful Integrated Graphics

The Ryzen 7 8700G is a Zen 4, Ryzen 7000-class processor that also includes a Radeon 700M-series GPU (the Radeon 780M) on the same chip. AMD says the iGPU will be powerful enough to play pretty much any PC game at 1080p.

AMD Ryzen 9000 Zen 5 CPU specs and launch timeframe revealed in latest leak

AMD's next-gen CPUs will compete with Intel's Arrow Lake-S lineup

Ryzen 9 5950X going for the all-time low price of $364

AMD's Ryzen 9 5950X is a top CPU choice for those who want to play and work hard – especially if you haven't jumped into the AM5 platform yet.

The Best CPU Coolers - Early 2024

How should you keep your CPU cool? The more powerful your CPU is, the bigger and better the cooler you'll need, but rest assured our guide covers many great options at every size and price point.

Intel 15th-gen Core 'Arrow Lake' might support 120 Gbps Thunderbolt 5 and 20 PCIe 5.0 lanes

New Intel processors and Thunderbolt 5 are coming later in 2024

Intel's Arrow Lake-S desktop CPUs could ditch Hyper-Threading after more than two decades

Hyper-Threading was originally introduced by Intel in its single-core Xeon and Pentium 4 processors

Intel Granite Rapids Xeon CPUs could ship with up to 480MB of L3 cache

Granite Rapids Xeons are expected to hit the market in 2H 2024

What just happened? Intel's next-gen Xeon CPUs, codenamed Granite Rapids, could come with a massive cache upgrade compared to their predecessors. While the high-end Emerald Rapids SKUs ship with up to 320MB of L3 cache, the leading Granite Rapids chips are said to offer a whopping 480MB of L3 cache.

Disappointing benchmarks for dual-core "Intel 300" CPU prove modern games need more cores

Even cheap quad-core processors fare better with AAA games